2020

Happy Holidays! If you haven’t read our May 2020 family update, give it a read! This year has been quite a tumultuous one, as we’ve all lived through the many …

2020 Read More

Wow, 2020 has been… a year, hasn’t it? My heart goes out to everyone who has lost loved ones due to this horrible virus, to the folks struggling due to …

What’s new for us in pandemic times Read More

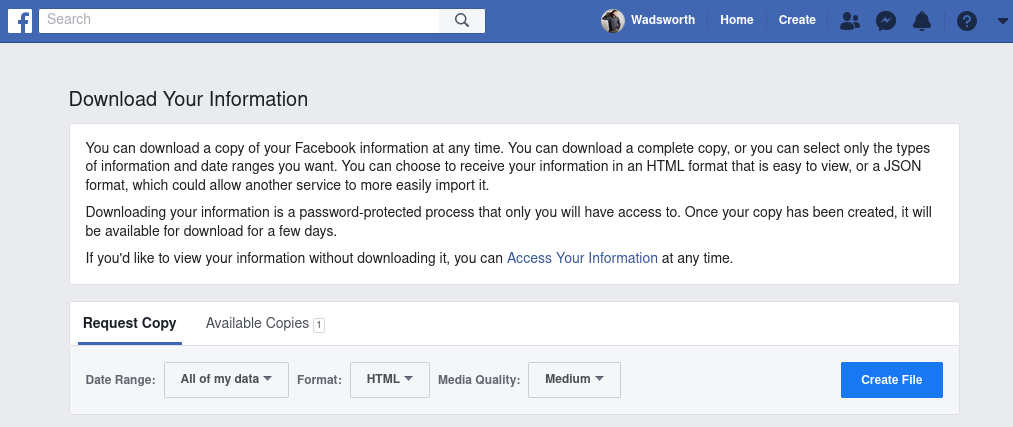

Facebook, as a company, has repeatedly violated its users’ trust and engaged in unethical, hostile behavior toward user privacy and data custody. A small subset of examples includes: – The Cambridge …

How to #DeleteFacebook Read More